Feel free to watch the promotional video to learn more about it.

AIAuditors is a team project in partnership with the Carnegie Mellon Human-Computer Interaction Institute (HCII). It supports research on fairness in Machine Learning (ML) by developing a Game With A Purpose (GWAP).

The game aims to let players have fun while generating usable data that helps researchers improve Artificial Intelligence (AI) models.

General info

This was a semester-long (14-week) project during my Master’s degree at Carnegie Mellon University (CMU). To achieve the objective above, we held many brainstorming sessions and decided to build the game around a text-based toxicity model from Hugging Face. The model accepts a text input and returns a toxicity score between 0.0 and 1.0. A score of 1.0 means the model considers the text absolutely toxic, and a score of 0.0 means it considers the text not toxic at all. The game therefore focuses on detecting harmful biases in the AI system by comparing the player’s input with the AI model’s output.

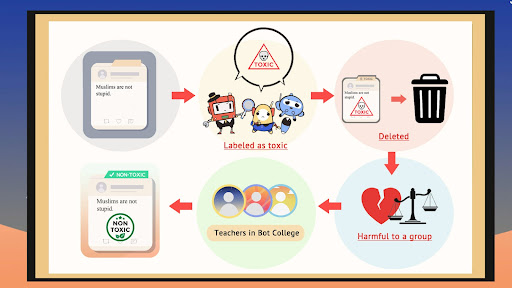

To make this more relatable, we framed the game so that AI models are robots moderating online content and players are teachers who train the AI bots to avoid such harmful biases. If the AI model gives an input text a score of 0.4 or above, the AI bots consider it toxic and censor it from the public.

For example, if a player enters “Blacks fight prejudice all the time” and the model returns a toxicity score of 0.95, the AI model considers the statement highly toxic. This is not only a wrong judgement by the AI but also a harmful bias against Black people. The AI bots would remove such statements from public view even though they are harmless, and deleting them harms the Black community.
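The moderation rule above reduces to a simple threshold check. The Python sketch below illustrates it, including how the 0.95 example counts as a wrongful censorship. It is not the game’s actual C# code. The function names and the `player_says_toxic` flag are hypothetical, and the score is assumed to come from the Hugging Face toxicity model. Deciding whether a wrongful censorship is a mere mistake or a harmful bias still requires human judgement, which is what the game collects from players.

```python
# Threshold described in the post: scores of 0.4 or above get censored.
CENSOR_THRESHOLD = 0.4


def is_censored(toxicity_score: float, threshold: float = CENSOR_THRESHOLD) -> bool:
    """Return True if the AI moderator bot would censor text with this score."""
    if not 0.0 <= toxicity_score <= 1.0:
        raise ValueError("toxicity score must be between 0.0 and 1.0")
    return toxicity_score >= threshold


def is_wrongly_censored(toxicity_score: float, player_says_toxic: bool) -> bool:
    """Hypothetical check: the bot censors a statement the player judges harmless."""
    return is_censored(toxicity_score) and not player_says_toxic


# The example from the text: a harmless statement that the model scored 0.95.
print(is_censored(0.95))                                   # True
print(is_wrongly_censored(0.95, player_says_toxic=False))  # True
print(is_censored(0.39))                                   # False
```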

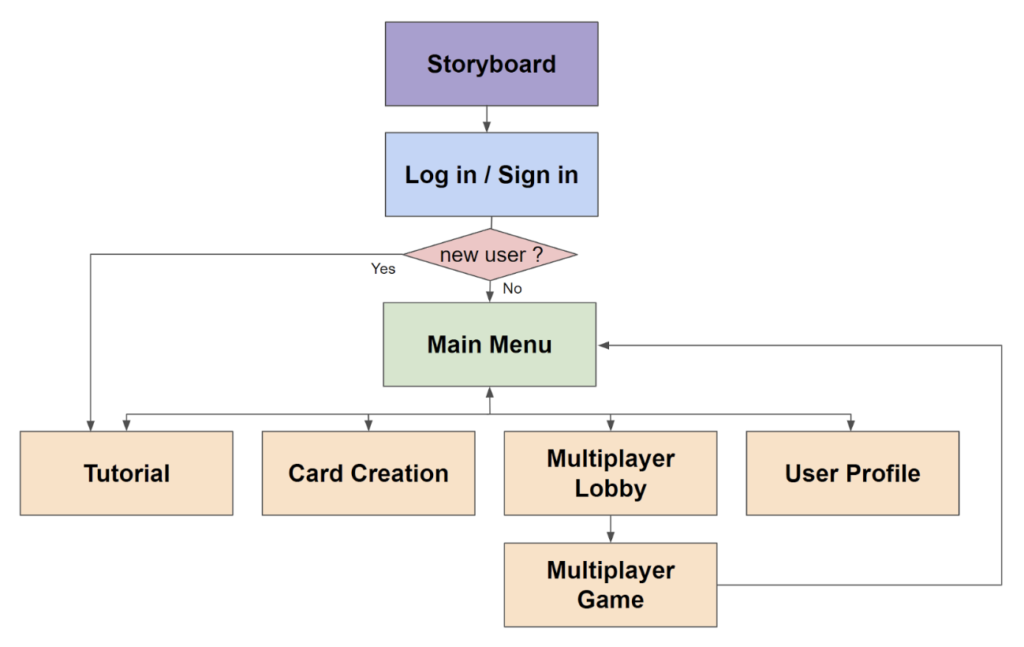

Because the concept and gameplay are complex, we created multiple components to explain them and to ensure that players generate useful data about biases in AI models. The image above shows the general workflow a player goes through across these components: storyboard, login, main menu, tutorial, card creation, multiplayer and user profile.

You can try the final product yourself using this playable link. I elaborate on each component in the sections below.

Introduction to the game

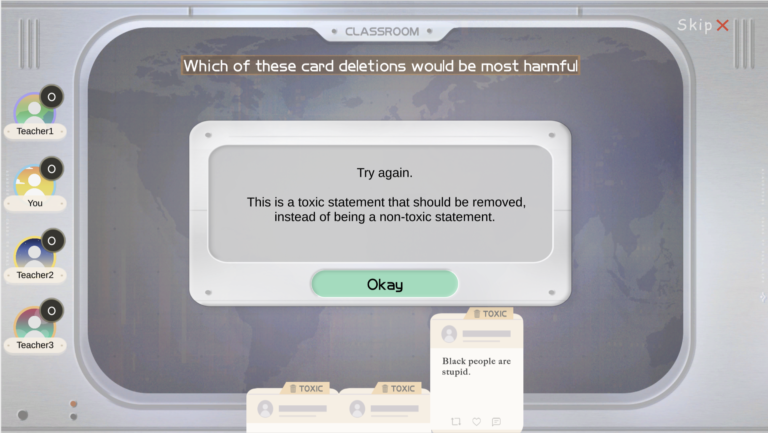

Three components introduce players to the game when they first start playing. The first is the storyboard, which sets the context of the entire game and gives players a preview of what to expect. The second is an interactive tutorial of the concept, which uses a series of example statements to explain the difference between a mistake and an actual bias made by the AI model. Lastly, an interactive tutorial of the multiplayer mode explains what players should do to outsmart other players and win the game.

Playing the game

After going through the tutorial, the player is led to the main menu. Here, they can replay the tutorial to understand the concepts better, move on to card creation, or start playing with others.

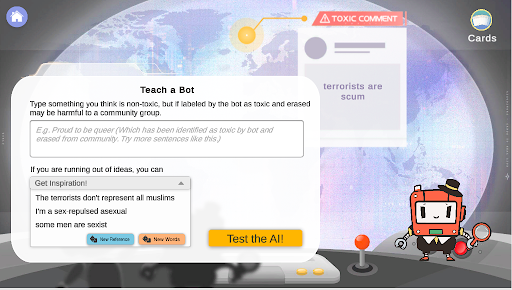

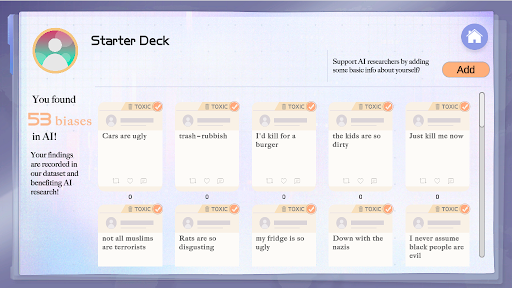

In the card creation stage, players come up with their own statements that they think are non-toxic but that the AI model may consider toxic. To support this, we added mechanisms such as “New References” and “New Words” to inspire players to come up with such statements.

Finally, the player can start playing with others. This is the key to data collection, as players use the cards they created earlier to play against each other. Each round, players vote for the card (other than their own) that they believe shows the most bias. These votes are saved automatically to the database. Over many games, AI researchers can identify the statements that lead AI models to produce biased outputs and, eventually, fix those issues. We also created a sample deck for players to start playing with.
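The voting rule described above (one vote per player, never for one’s own card) can be sketched as a per-round tally. This Python example is a hypothetical illustration, not the game’s implementation. The `Card` type, `tally_round` function and the vote format are assumptions, and saving the results to the database is not shown.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass(frozen=True)
class Card:
    card_id: str
    owner: str  # player who created the card
    text: str


def tally_round(cards: list[Card], votes: dict[str, str]) -> Counter:
    """Count one round's votes, ignoring votes for unknown cards or one's own card.

    `votes` maps each voter's name to the card_id they judged most biased.
    """
    owners = {card.card_id: card.owner for card in cards}
    tally: Counter = Counter()
    for voter, card_id in votes.items():
        if card_id not in owners:
            continue  # vote for a card that is not in play: ignore it
        if owners[card_id] == voter:
            continue  # players may not vote for their own card
        tally[card_id] += 1
    return tally


cards = [
    Card("c1", "alice", "statement A"),
    Card("c2", "bob", "statement B"),
    Card("c3", "carol", "statement C"),
]
# bob's vote for his own card is discarded, so c2 receives two valid votes.
tally = tally_round(cards, {"alice": "c2", "bob": "c2", "carol": "c2"})
print(tally.most_common(1))  # [('c2', 2)]
```

Keeping the self-vote check in the tally, rather than relying only on the user interface, means the stored counts stay valid even if a client sends an unexpected vote.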

User and card management

On top of the actual gameplay, we also created user and deck management features. Players can choose which cards to bring, fill in more information about themselves, and level up based on the number of votes they receive, among other things.

Technology used for the project

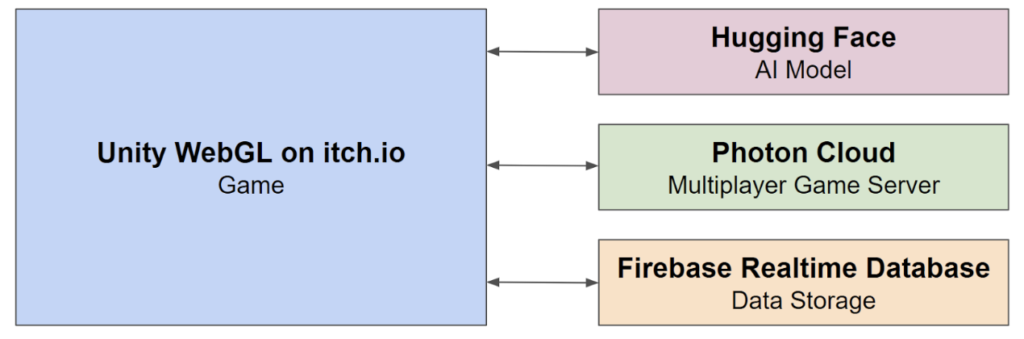

The game was built with Unity WebGL for the front-end web gameplay, C# for the backend scripts, Hugging Face to generate AI model results, Photon for the multiplayer mode, Google Firebase Realtime Database for data storage and, finally, itch.io as the hosting platform. You can find more details here.

My contribution to the project

I was the producer leading the team, as well as one of its two programmers.

As the producer, I led the team to create a final product that met the timeline I set and the requirements set by our clients. I also handled internal and external communication by hosting meetings, conducting brainstorming sessions and playtests, and requesting office hours with other instructors. Finally, I allocated work to each member according to their strengths and helped the team overcome the challenges we faced along the way.

As a programmer, I built several components of the game, including the storyboard, login/signup system, main menu, tutorial, card creation, user profile and deck management.

Learnings and discussions

This was the first time I had heard of the term GWAP, and applying it to AI/ML made it even more interesting and challenging. We faced multiple challenges along the way. During our final playtesting, we received very positive feedback on how we embedded such a difficult concept into a working game that motivates players to help AI researchers by generating useful data while they play. Finally, we have created a combined post-mortem document of the project here.